For three years, the AI conversation has been about which model is smartest. That conversation is winding down. Foundation models are converging on capability and diverging on price. A different question has taken over: what surrounds the model? The surrounding layer decides almost everything the user gets: the agent loop, the tools it can call, the skills it knows, the memory it carries, the permissions governing what it touches, and the orchestration that stitches it all together. That layer has a name. It is the harness.

Example Harnesses

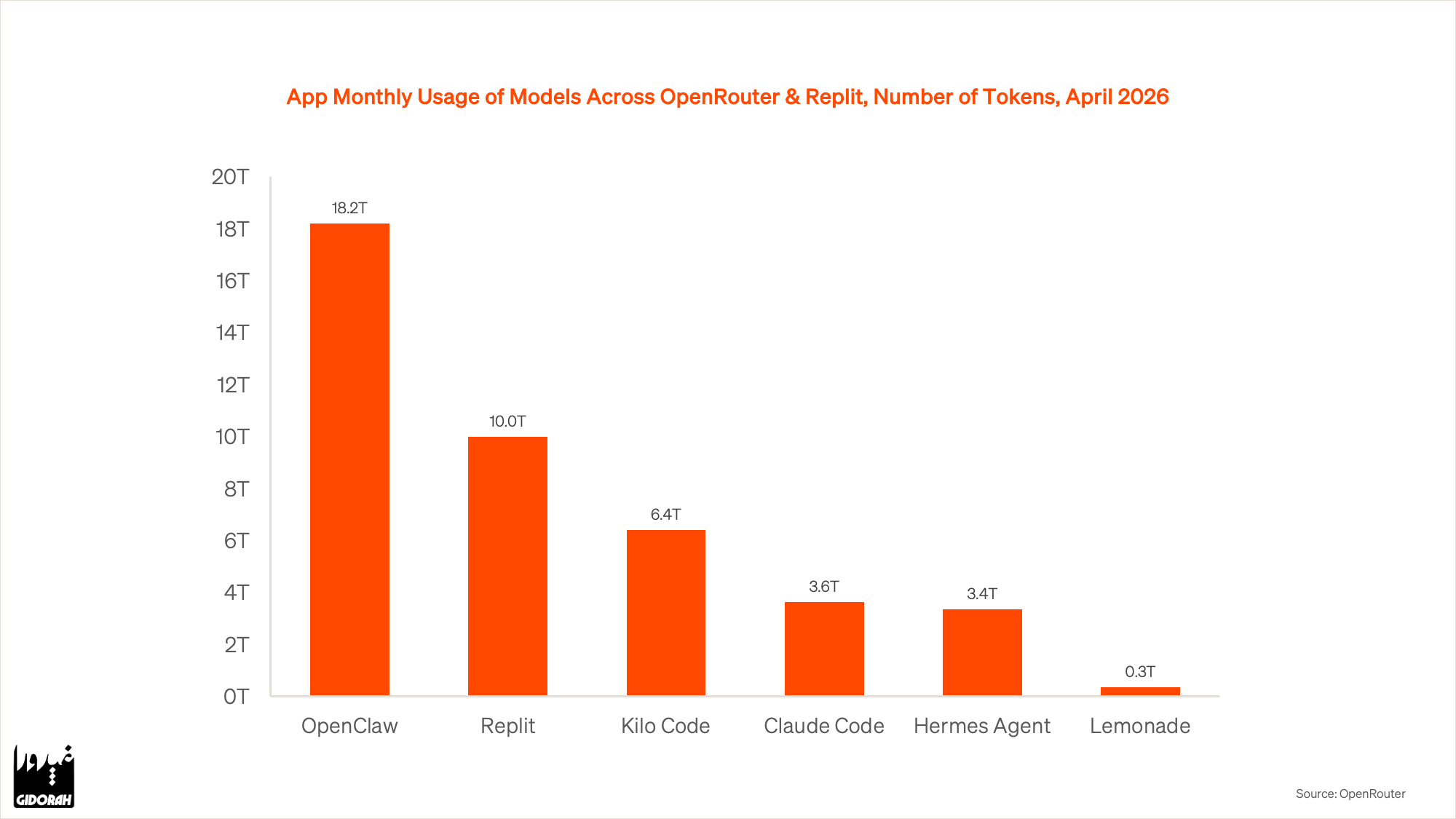

There are already dozens of harnesses on the market. Four of the most widely used map out the design space.

Claude Code is Anthropic's first-party harness. Developer-centric, built around code, shell, and file operations. It is how most engineers first encounter the pattern.

OpenCLAW is open-source, MIT-licensed, self-hosted. A gateway that plugs agents into Discord, Slack, Telegram, WhatsApp, and iMessage, so the agent lives where you already talk.

Hermes, from Nous Research, is autonomous and self-improving. It creates its own skills from experience, carries persistent memory across sessions, and runs across local, Docker, SSH, and cloud backends.

Replit is a cloud-native harness for building and shipping apps. Browser-based, with hosting, databases, and auth built in. Describe what you want and the result runs at a URL a few minutes later.

Same model class underneath. Four very different products on top. And the field is growing.

Skills and Memory

Two components inside the harness do most of the heavy lifting: skills and memory.

Skills are procedural knowledge. Reusable instructions that tell the agent how to do a recurring job. Write a research memo this way. Review a pull request that way. Triage email using these rules. A skill captures the craft.
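To make "a skill captures the craft" concrete, here is a minimal Python sketch. It assumes a skill is nothing more than a named procedure that the harness formats into the agent's instructions on demand. The `Skill` and `SkillLibrary` names and the pull-request steps are hypothetical; each real harness defines its own skill format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """A reusable procedure: a name, when to use it, and the steps to follow."""
    name: str
    when_to_use: str
    steps: tuple[str, ...]

    def render(self) -> str:
        """Format the skill as instructions that can be placed in the agent's prompt."""
        numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(self.steps, start=1))
        return f"Skill: {self.name}\nUse when: {self.when_to_use}\n{numbered}"


class SkillLibrary:
    """Hypothetical registry of skills, keyed by name."""

    def __init__(self) -> None:
        self._skills: dict[str, Skill] = {}

    def add(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def instructions_for(self, name: str) -> str:
        # Only the requested skill is rendered, so unused procedures stay out of the prompt.
        return self._skills[name].render()


library = SkillLibrary()
library.add(Skill(
    name="review-pull-request",
    when_to_use="A pull request needs review before merge",
    steps=(
        "Read the description and linked issue",
        "Run the test suite and note failures",
        "Check new code for missing tests",
        "Summarize findings as blocking vs. non-blocking",
    ),
))
print(library.instructions_for("review-pull-request"))
```

Rendering only the skill a task calls for, rather than every procedure at once, is one way to keep instructions from crowding a finite context window.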

Memory is contextual knowledge. Who the user is, what matters to them, what has already been tried, what has failed. Without memory, every session is a cold start. With it, the agent compounds: this week's work builds on last week's.

An agent without skills is a clever stranger. An agent without memory is an amnesiac. An agent with both, inside a harness, starts to feel like a colleague who has been on the job for a year. That gap, not the model's IQ, is what the user feels.

The Memory Problem

Memory has a catch. An agent's context window is finite. Even a million-token window can fill within a week of real work. Emails, drafts, notes, and tool outputs add up fast.

Cramming everything in does not work. Researchers tested 18 frontier models and every one got worse as input grew. The industry calls it context rot. Past a certain length, the model attends less and less reliably to much of what is in front of it.

The fix mirrors how humans work. You do not keep every past conversation running in your head. You carry a summary and retrieve details when you need them.

A good harness does the same. A short summary stays in context at all times: who the user is, what they care about, what they are working on. The long record lives in files outside the context. When the agent needs a specific fact, it searches by meaning, not keywords. Ask "What did I decide about enterprise pricing last month?" and the right notes surface even if they never use the word "pricing."

Without the summary, the agent forgets who you are. Without search, it drowns. A working harness has both.
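The two-tier design can be sketched in a few lines of Python. Treat it as a toy under stated assumptions: the `Memory` class, the in-memory record list (standing in for files on disk), and especially `toy_embed`, a hand-written word-to-concept table standing in for a real embedding model, are all hypothetical. What the sketch shows is the shape: the summary is always in context, the long record is not, and retrieval ranks notes by vector similarity rather than exact word overlap.

```python
import math
from dataclasses import dataclass, field
from typing import Callable

Vector = dict[str, float]

# Hypothetical stand-in for an embedding model: words map to shared "concepts",
# so "charge" and "pricing" land on the same dimension even though they differ.
CONCEPTS = {
    "pricing": "price", "price": "price", "charge": "price", "discount": "price",
    "enterprise": "large-customer", "large": "large-customer",
    "hiring": "people", "recruiter": "people",
}


def toy_embed(text: str) -> Vector:
    vec: Vector = {}
    for word in text.lower().split():
        concept = CONCEPTS.get(word.strip(".,;?!\"'"))
        if concept:
            vec[concept] = vec.get(concept, 0.0) + 1.0
    return vec


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(weight * b.get(key, 0.0) for key, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


@dataclass
class Memory:
    summary: str                       # tier 1: always in context
    embed: Callable[[str], Vector]     # meaning-based encoder (toy_embed here)
    records: list[tuple[str, Vector]] = field(default_factory=list)  # tier 2: stands in for files

    def remember(self, note: str) -> None:
        self.records.append((note, self.embed(note)))

    def recall(self, query: str, k: int = 2) -> list[str]:
        # Rank stored notes by similarity to the query; drop ones with no overlap in meaning.
        q = self.embed(query)
        scored = sorted(((cosine(q, vec), note) for note, vec in self.records), reverse=True)
        return [note for score, note in scored[:k] if score > 0]

    def build_context(self, query: str) -> str:
        # The summary always goes in; only retrieved notes come from the long record.
        notes = "\n".join(f"- {note}" for note in self.recall(query))
        return f"{self.summary}\nRelevant notes:\n{notes}"


memory = Memory(summary="User: founder of a B2B startup, focused on sales this quarter.", embed=toy_embed)
memory.remember("Decided to charge large customers a flat annual fee, with a discount for multi-year deals.")
memory.remember("Recruiter call moved to Thursday; still hiring two engineers.")
print(memory.build_context("What did I decide about enterprise pricing last month?"))
```

The pricing note never contains the word "pricing"; it surfaces because "charge" and "pricing" map to the same concept. A real embedding model learns such associations from data instead of reading them from a table.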

Three Tiers of Harness

The harness stack extends across three tiers, each with a different buyer and different economics.

At the individual tier, the harness is a personal chief of staff. Email, calendar, writing, research, coding. One operator, one harness, a 2–10x multiplier on output. Willingness to pay: tens to hundreds of dollars a month.

At the SMB tier, the harness is the ops team of one. Bookkeeping, support, outbound, scheduling. Built once, run forever, replacing a role that costs tens of thousands a year. Willingness to pay: thousands a month.

At the enterprise tier, the harness is the workflow platform. Compliance review, underwriting, legal diligence, code review, wrapped in audit logs, permissions, and custom skills that encode house rules. Willingness to pay: millions a year.

Stacked up, the total addressable market (TAM) of the harness layer is every knowledge worker's salary line. Measured in trillions, not billions. That is where the value is accruing.

A Practice, Not a Product

Harnessing is a practice, not a product. The five steps are the same whether you are a solo operator or a Fortune 500 company.

- Define the outcome, not the task. What does "done" look like?

- Give it tools — the things a human on the job would reach for. Email, files, browser, code, database.

- Write skills for the procedures you repeat. Every recurring workflow is a skill waiting to be captured.

- Give it memory. Who you are, what you have decided, what went wrong before.

- Close the loop. Verify, correct, and feed the correction back in. The agent improves only when the loop closes.
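The five steps can be read as a single loop. The Python sketch below illustrates that loop under loud assumptions: `toy_agent` is a deterministic stand-in for a model, and the tickets, the `fetch_tickets` tool, the one-line skill, and the check are all invented for the example. The point is the structure: "done" is written down as a verifier, and each correction is stored so the next attempt, and the next run, starts from it.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical types: an agent turns (task, lessons) into a draft; a verifier
# returns None when the outcome is met, otherwise a correction to remember.
Agent = Callable[[str, list[str]], str]
Verifier = Callable[[str], Optional[str]]


@dataclass
class Harness:
    agent: Agent        # the model plus its tools (step 2) and skills (step 3)
    verify: Verifier    # step 1: "done" written down as a check
    lessons: list[str] = field(default_factory=list)  # step 4: memory that outlives a run

    def run(self, task: str, max_attempts: int = 3) -> str:
        for _ in range(max_attempts):
            draft = self.agent(task, self.lessons)
            correction = self.verify(draft)          # step 5: verify
            if correction is None:
                return draft
            if correction not in self.lessons:
                self.lessons.append(correction)      # step 5: feed the correction back in
        raise RuntimeError(f"outcome not met after {max_attempts} attempts")


# Toy example: a weekly status note. Tickets, tool, and skill are made up.
TICKETS = [("#12 login bug", "fixed"), ("#15 slow export", "open")]


def fetch_tickets() -> list[tuple[str, str]]:
    """Stand-in tool; a real harness might query an issue tracker here."""
    return TICKETS


SKILL_LINE = "- {title}"  # stand-in skill: how each line of a status note is written


def toy_agent(task: str, lessons: list[str]) -> str:
    # Stand-in for the model: follows the skill and applies any remembered lessons.
    show_status = "mark each ticket's status" in lessons
    lines = []
    for title, status in fetch_tickets():
        line = SKILL_LINE.format(title=title)
        lines.append(f"{line} ({status})" if show_status else line)
    return "\n".join([task, *lines])


def check_open_tickets_flagged(draft: str) -> Optional[str]:
    # Outcome: a reader can see at a glance which tickets are still open.
    open_titles = [title for title, status in TICKETS if status == "open"]
    if all(f"{title} (open)" in draft for title in open_titles):
        return None
    return "mark each ticket's status"


harness = Harness(agent=toy_agent, verify=check_open_tickets_flagged)
print(harness.run("Weekly status"))
print(harness.lessons)
```

The first run fails its check once, stores the correction, and passes on the retry. A second call to `harness.run` passes on its first attempt, because the lesson is still in memory: the loop has closed.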

Do this once and the agent is a toy. Do it for a quarter and it is an asset. Do it for a year and it is a moat.

Models get cheaper and more interchangeable every quarter. But harnesses accumulate skills, memory, integrations, and trust, and they get stickier every quarter.

Which model you use is the easy question. Which harness you own is the one that compounds. Whoever owns the harness owns the relationship with the user. That is where AI value is actually accruing.